Protect your digital space with DeepBrain AI's online Deepfake Detector, designed to identify AI-generated content quickly and accurately, within minutes.

Easily recognize advanced deepfake videos that are difficult to detect with the naked eye.

Powered by advanced deep learning algorithms, DeepBrain AI's deepfake identification tool examines multiple elements of video content to distinguish and detect different types of synthetic media manipulation.

Upload your video and our AI will analyze it, returning an accurate assessment within five minutes of whether it was created using deepfake or AI technology.

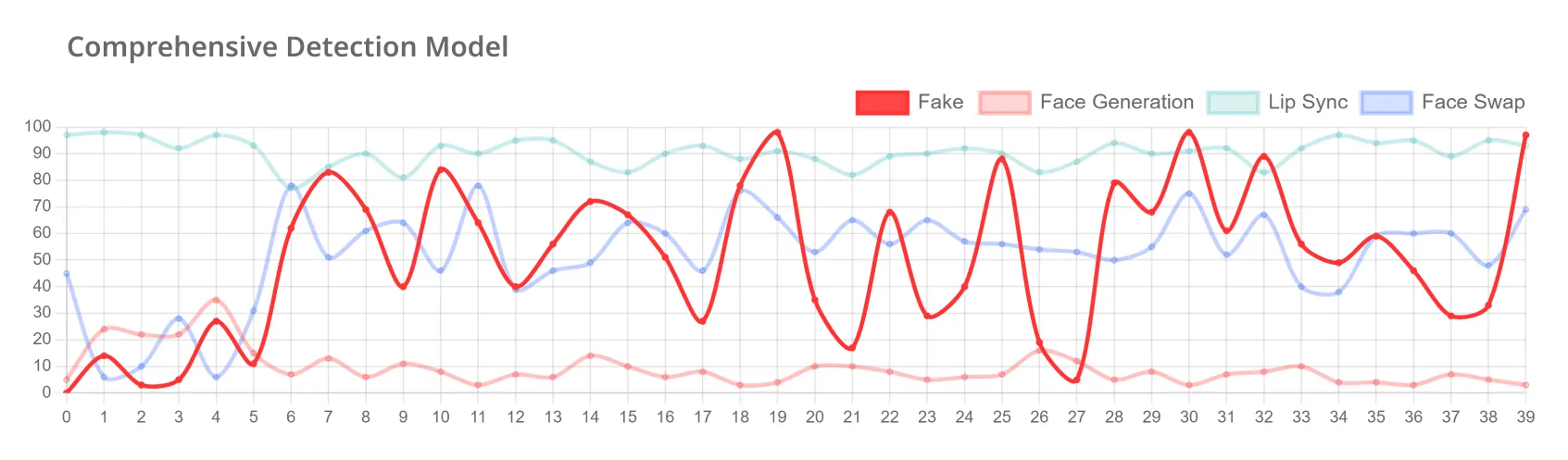

We accurately detect various forms of deepfake, such as face swaps, lip-sync manipulations, and AI-generated videos, ensuring that you engage with authentic, trustworthy content.

Detect manipulated videos and media quickly and accurately to protect yourself against a wide range of deepfake crimes. DeepBrain AI's detection solution helps prevent fraud, identity theft, personal exploitation, and disinformation campaigns.

We continuously advance our technology to combat deepfakes, protect vulnerable groups, and provide actionable insights against digital exploitation. We are committed to empowering organizations to protect digital integrity effectively.

We provide our solutions to law enforcement agencies, including the South Korean National Police Agency, and partner with them to improve our deepfake detection software so that related crimes can be addressed faster.

DeepBrain AI was selected by South Korea's Ministry of Science and ICT to lead the “Deepfake Manipulation Video AI Data” project in collaboration with Seoul National University's AI Research Lab (DASIL).

We offer a free one-month demo to businesses, government agencies, and educational institutions to help them combat AI-generated video crimes and strengthen their response capabilities.

Check our frequently asked questions for quick answers about our deepfake detection solution.

A deepfake is synthetic media created using artificial intelligence and machine learning techniques. It typically involves manipulating or generating visual and audio content to make it appear that a person said or did something they never actually did. Deepfakes range from face swaps in videos to fully AI-generated images or voices that imitate real people with a high degree of realism.

DeepBrain AI's deepfake detection solution is designed to identify and filter fake AI-generated content. It can detect several types of deepfakes, including face swaps, lip-sync manipulations, and AI- or computer-generated videos. The system works by comparing suspect content against original data to verify authenticity. This technology helps prevent potential harm caused by deepfakes and supports criminal investigations. By quickly flagging artificial content, DeepBrain AI's solution aims to protect individuals and organizations from deepfake-related threats.

Every deepfake detection system uses different techniques to identify manipulated content. DeepBrain AI's deepfake detection process uses a multi-step method to verify authenticity.

This multi-step approach allows DeepBrain AI to thoroughly analyze videos, images, and audio to determine whether they are genuine or artificially created.

The accuracy of DeepBrain AI's deepfake detection technology varies as the technology develops, but it generally detects deepfakes with over 90% accuracy. As the company continues to advance its technology, this accuracy keeps improving.

DeepBrain AI's current deepfake solution focuses on rapid detection rather than preventive blocking. The system analyzes videos, images, and audio, typically providing results within 5 to 10 minutes. It classifies content as “real” or “fake” and provides data on alteration rates and synthesis types.

To mitigate harm, the solution does not automatically remove or block content. Instead, it notifies relevant parties, such as content moderators or individuals concerned about deepfake impersonation. Responsibility for taking action lies with those parties, not with DeepBrain AI.
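The detect-then-notify flow described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `DetectionResult` record, its field names, and the `route_result` helper are hypothetical and are not DeepBrain AI's actual API or schema. The sketch shows a result carrying a “real” or “fake” verdict plus an alteration rate and synthesis type, and a routing step that notifies recipients without removing or blocking anything.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class DetectionResult:
    """Hypothetical result record; field names are assumptions for illustration."""
    media_id: str
    verdict: str            # "real" or "fake"
    alteration_rate: float  # assumed to be a fraction between 0.0 and 1.0
    synthesis_type: str     # e.g. "face_swap", "lip_sync", "ai_generated", "none"


def route_result(
    result: DetectionResult,
    notify: Callable[[str, DetectionResult], None],
    recipients: List[str],
) -> int:
    """Notify each recipient when content is flagged as fake.

    The content itself is never removed or blocked here; acting on the
    notification is left to the recipients. Returns the number of
    notifications sent.
    """
    if result.verdict not in ("real", "fake"):
        raise ValueError(f"unexpected verdict: {result.verdict!r}")
    if result.verdict == "real":
        return 0
    for recipient in recipients:
        notify(recipient, result)
    return len(recipients)


# Usage: collect notifications in a list instead of sending real messages.
sent = []
flagged = DetectionResult("clip-001", "fake", 0.72, "face_swap")
count = route_result(
    flagged,
    lambda who, res: sent.append((who, res.media_id)),
    ["moderator@example.com"],
)
```

Passing the `notify` callback in as a parameter keeps the sketch independent of any particular messaging channel, which matches the point that the decision to act belongs to the notified party.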

DeepBrain AI is actively working with other organizations and companies to make preventive blocking possible. For now, its detection solutions help analyze suspect content and assist in investigating deepfake videos to limit further harm.

Major technology companies are actively responding to the deepfake problem through collaborative initiatives aimed at mitigating the risks of deceptive AI content. Recently, they signed the “Tech Accord to Combat Deceptive Use of AI in 2024 Elections” at the Munich Security Conference. The accord commits companies such as Microsoft, Google, and Meta to developing technologies that detect and counter deceptive content, particularly in the context of elections. They are also developing advanced digital watermarking techniques to authenticate AI-generated content and partnering with governments and academic institutions to promote ethical AI practices. In addition, these companies continuously update their detection algorithms and raise public awareness of deepfake risks through educational campaigns, demonstrating a strong commitment to addressing this emerging challenge.

Although major technology companies are making progress against deepfakes, their efforts may not be enough. The sheer volume of content on social media makes it nearly impossible to catch every instance of manipulated media, and more sophisticated deepfakes can evade detection for longer periods.

For individuals and organizations seeking additional protection, specialized solutions such as DeepBrain AI offer a valuable layer of security. By continuously analyzing media on the Internet and tracking specific individuals, DeepBrain AI helps mitigate the risks associated with deepfakes. In short, while industry initiatives are important, a multifaceted approach that includes specialized tools and public awareness is essential to address the deepfake challenge effectively.